Here is the story of how I crashed my own server, successfully engineered my way out of it, and cut execution time from 2.5 seconds to 250ms.

— Me, moments before disaster

1. The Naive Approach

My initial architecture was simple (and efficient... or so I thought):

- User sends code via API.

- API spins up a brand new Docker container.

- Code runs, output is captured.

- Container is destroyed.
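The four steps above amount to one `docker run --rm` per request. Here is a minimal Python sketch of that lifecycle. It assumes a `python:3.12-alpine` image and uses hypothetical helper names (`naive_command`, `run_naive`); the post does not show its actual code. Notice what is missing: nothing limits how many of these run at once, and every request pays the full container boot cost.

```python
import subprocess

# Hypothetical image; the post does not say which one it uses.
IMAGE = "python:3.12-alpine"


def naive_command(image: str = IMAGE) -> list[str]:
    """Build the command for one throwaway container per request.

    --rm deletes the container as soon as it exits (step 4), and -i keeps
    stdin open so the user's code can be piped in (step 1).
    """
    return ["docker", "run", "--rm", "-i", image, "python", "-"]


def run_naive(code: str, timeout_s: float = 10.0) -> str:
    """Steps 2 and 3: boot a brand-new container, run the code, capture stdout."""
    result = subprocess.run(
        naive_command(),
        input=code,          # `python -` reads the program from stdin
        capture_output=True,
        text=True,
        timeout=timeout_s,   # raises subprocess.TimeoutExpired if exceeded
    )
    return result.stdout
```

On a machine with Docker installed, `run_naive("print(1 + 1)")` should return the container's stdout, which is `2` followed by a newline.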

It worked beautifully for local testing. I felt like a genius.

The Crash 💥

Then I decided to run a stress test. I fired up ApacheBench (ab) and sent 1,000 concurrent requests.

Result: System Freeze

The kernel panicked trying to spin up 1,000 Docker containers simultaneously. The CPU usage hit 100%, memory was swallowed whole, and requests started timing out left and right.

Lesson Learned: Unbounded concurrency is a death sentence. You cannot simply "spin up" resources on demand at scale. You need backpressure.
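Backpressure can be as simple as a bounded queue that refuses new work when it is full, instead of starting more of it. Below is a minimal sketch. It assumes an in-process queue and a hypothetical `MAX_PENDING` limit. The HTTP status codes are the conventional ones (202 Accepted, 429 Too Many Requests); the post does not specify them.

```python
import queue

# Hypothetical cap on queued jobs; size it to what the workers can drain.
MAX_PENDING = 100

jobs = queue.Queue(maxsize=MAX_PENDING)


def submit(code: str) -> int:
    """Accept a job only if there is room; otherwise push back on the caller.

    Returns an HTTP-style status code: 202 (Accepted) or 429 (Too Many Requests).
    """
    try:
        jobs.put_nowait(code)  # never blocks and never starts a container
        return 202
    except queue.Full:
        return 429             # backpressure: the client should retry later
```

The design choice is to fail fast. A rejected client can retry after a delay, whereas accepting unbounded work is what led to the crash above.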

2. Why Not Just Run Synchronously?

You might ask: "Why queue at all? Why not just execute and return?"

🚫 Blocked Connections

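If execution takes 2 seconds, that HTTP connection stays open for the full 2 seconds. With 1,000 concurrent users, that means 1,000 open sockets, each holding a file descriptor. This quickly runs into common default per-process limits such as 1,024.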

🌊 No Backpressure

If traffic spikes to 5x capacity, a synchronous server crashes immediately. An async server just has a longer queue.

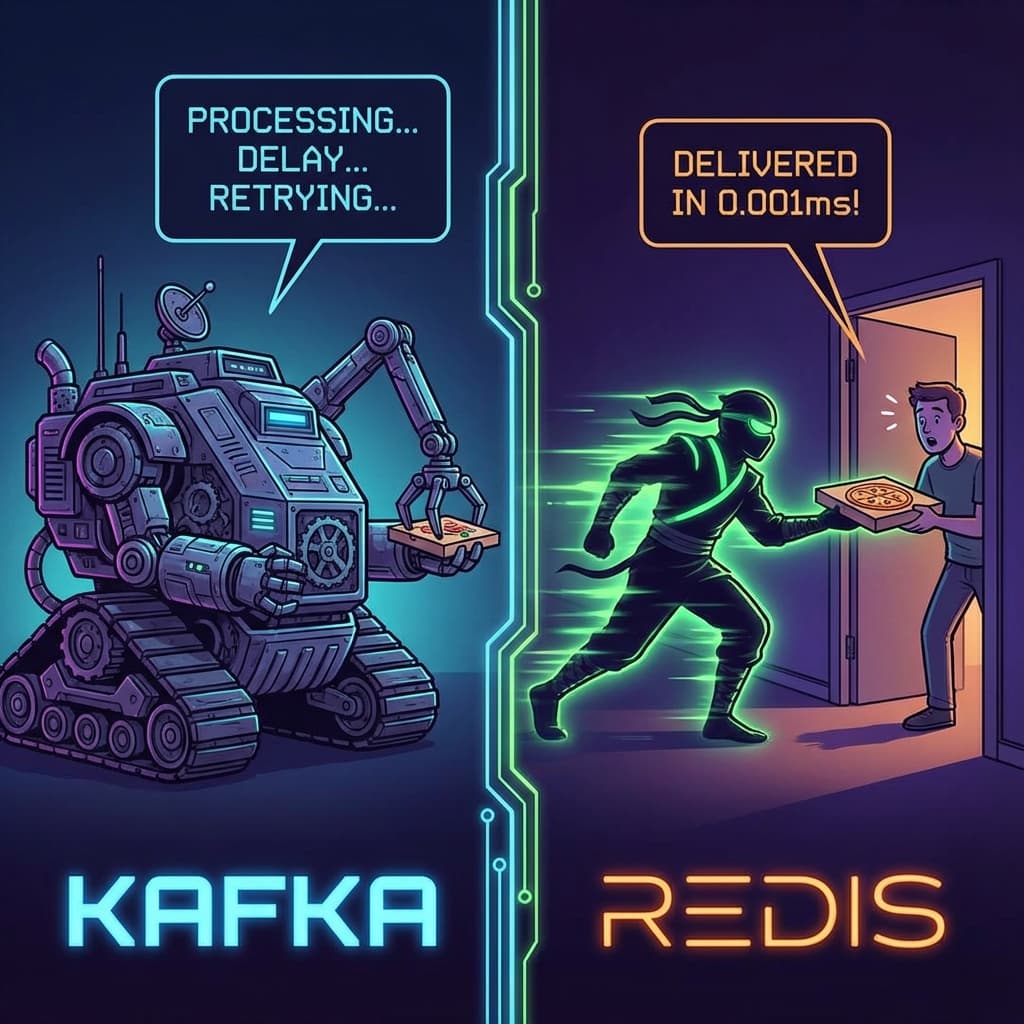
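The asynchronous alternative returns a job ID right away and lets the client poll for the result, so no HTTP connection is held open during execution. Below is a minimal in-process sketch. `submit_job`, `status`, `worker`, `start_workers`, and the in-memory `results` dict are hypothetical stand-ins for the real API and store. The worker only records a placeholder result instead of running code.

```python
import queue
import threading
import uuid

# Hypothetical in-memory stores; a real deployment would use Redis or a database.
results: dict[str, str] = {}
pending: queue.Queue = queue.Queue()


def submit_job(code: str) -> str:
    """Return a job ID immediately instead of holding the connection open."""
    job_id = uuid.uuid4().hex
    results[job_id] = "queued"
    pending.put((job_id, code))
    return job_id


def status(job_id: str) -> str:
    """What a client would poll (e.g. GET /jobs/<id>) until the job is done."""
    return results.get(job_id, "unknown")


def worker() -> None:
    """Drain the queue at whatever rate the execution layer can sustain."""
    while True:
        job_id, code = pending.get()
        # Placeholder for real execution; only records that the job finished.
        results[job_id] = f"done ({len(code)} chars of code)"
        pending.task_done()


def start_workers(n: int) -> None:
    """Run n worker threads in the background."""
    for _ in range(n):
        threading.Thread(target=worker, daemon=True).start()
```

With this shape, a traffic spike shows up as a longer `pending` queue rather than as thousands of open connections.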

3. The Queue: Kafka vs. Redis

Attempt A: Apache Kafka

My first instinct was "Enterprise Scale™".

- Pro: handled the throughput easily.

- Con: 700 to 1,000 ms of overhead just to enqueue a job.

Attempt B: Redis Streams

I stripped out Kafka and implemented Redis Streams.
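The post doesn't show its Redis code. The sketch below is a toy, in-memory stand-in (a hypothetical `ToyStream` class) that mimics the semantics of the real Redis commands XADD, XREADGROUP (with the `>` ID), and XACK. The API appends a job, and a worker in a consumer group claims only never-delivered entries. Each claimed job stays in the group's pending list until it is acknowledged. In redis-py these would be calls to `xadd`, `xreadgroup`, and `xack` against a live server. Real stream IDs are strings like `1700000000000-0`, not the integers used here.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class ToyStream:
    """In-memory model of one Redis stream with a single consumer group."""
    entries: list[tuple[int, dict]] = field(default_factory=list)
    last_delivered: int = 0  # like the group's last-delivered ID
    pending: dict[int, str] = field(default_factory=dict)  # entry ID -> consumer
    _ids: count = field(default_factory=lambda: count(1))

    def xadd(self, fields: dict) -> int:
        """Append a job and return its (toy) entry ID."""
        entry_id = next(self._ids)
        self.entries.append((entry_id, fields))
        return entry_id

    def xreadgroup(self, consumer: str, max_count: int = 1) -> list[tuple[int, dict]]:
        """Deliver never-delivered entries (the '>' ID) and mark them pending."""
        new = [e for e in self.entries if e[0] > self.last_delivered][:max_count]
        for entry_id, _ in new:
            self.pending[entry_id] = consumer
            self.last_delivered = entry_id
        return new

    def xack(self, entry_id: int) -> int:
        """Acknowledge a finished job; returns 1 if it was pending, else 0."""
        return 1 if self.pending.pop(entry_id, None) is not None else 0
```

The pending list is what makes a stream safer than a plain list for this workload. If a worker dies mid-job, its unacknowledged entries remain visible and can be reclaimed.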

4. Scaling the Execution Core

Finally, the bottleneck moved to the worker. I tried three strategies:

Attempt 1: Spin Up On Demand

FAILED: Tried to boot 1,000 OS processes at once. Kernel panic. Server melted.

Attempt 2: Capped Concurrency

TOO SLOW: Limited to 50 workers. Safe for the server, but it created 50-second wait times for users during spikes.
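The 50-second figure is consistent with simple arithmetic. Assume every job takes about the 2.5 seconds quoted at the top of the post and all 1,000 requests arrive at once. Then 50 workers clear the burst in 1,000 / 50 = 20 rounds, and the last job waits through all of them. A small sketch (the `worst_case_wait` name is hypothetical):

```python
import math


def worst_case_wait(jobs: int, workers: int, job_seconds: float) -> float:
    """Time until the last job in a simultaneous burst finishes.

    Jobs run in rounds of `workers` at a time; the final job completes at
    the end of round ceil(jobs / workers).
    """
    rounds = math.ceil(jobs / workers)
    return rounds * job_seconds


print(worst_case_wait(1_000, 50, 2.5))   # 50.0
print(worst_case_wait(1_000, 50, 0.25))  # 5.0 if each job took 250 ms instead
```

The formula shows the two levers available: more workers, or a shorter time per job.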

✨ Attempt 3: The "Pre-Warmed" Pool

SOLVED: Treat containers like database connections. Boot 50 containers before traffic hits and pause them. When a job arrives, unpause an existing one.
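Below is a minimal sketch of the pre-warmed pool. It assumes the docker CLI is available and uses hypothetical class names (`Backend`, `DockerCLI`, `WarmPool`). The docker subcommands (`run -d`, `pause`, `unpause`) are real; the image, the `tail -f /dev/null` keep-alive idiom, and the pool size are assumptions. Executing the user's code inside an acquired container (for example with `docker exec -i <id> python -`) is left out.

```python
import queue
import subprocess
from typing import Optional, Protocol


class Backend(Protocol):
    """The three operations the pool needs from a container runtime."""

    def start(self) -> str: ...
    def pause(self, cid: str) -> None: ...
    def unpause(self, cid: str) -> None: ...


class DockerCLI:
    """Backend that shells out to the real docker CLI."""

    def __init__(self, image: str = "python:3.12-alpine") -> None:
        self.image = image  # hypothetical image choice

    def _docker(self, *args: str) -> str:
        out = subprocess.run(["docker", *args], capture_output=True,
                             text=True, check=True)
        return out.stdout.strip()

    def start(self) -> str:
        # `tail -f /dev/null` keeps an otherwise idle container alive for reuse;
        # `docker run -d` prints the new container's ID.
        return self._docker("run", "-d", "--network", "none",
                            self.image, "tail", "-f", "/dev/null")

    def pause(self, cid: str) -> None:
        self._docker("pause", cid)

    def unpause(self, cid: str) -> None:
        self._docker("unpause", cid)


class WarmPool:
    """Boot `size` containers up front, keep them paused, lend them out."""

    def __init__(self, backend: Backend, size: int = 50) -> None:
        self.backend = backend
        self.idle: queue.Queue = queue.Queue()
        for _ in range(size):            # pay the boot cost before traffic hits
            cid = backend.start()
            backend.pause(cid)           # frozen: no CPU while idle
            self.idle.put(cid)

    def acquire(self, timeout: Optional[float] = None) -> str:
        """Take an idle container; blocks (backpressure) if all are busy."""
        cid = self.idle.get(timeout=timeout)  # raises queue.Empty on timeout
        self.backend.unpause(cid)
        return cid

    def release(self, cid: str) -> None:
        """Freeze the container again and return it to the idle queue."""
        self.backend.pause(cid)
        self.idle.put(cid)
```

On Linux, `docker pause` freezes the container's processes through the cgroup freezer. An idle container still holds its memory but uses no CPU, and unpausing is typically much cheaper than a cold boot. Blocking in `acquire` when every container is busy also provides backpressure.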

Handling "Dirty" State

Reusing containers introduces state pollution. We solved this with a strict health policy:

- Isolation: Containers are locked down (no network, limited disk).

- Dirty Checks: If a run ends in TLE (time limit exceeded) or OOM (out of memory), its container is marked "Dirty" and destroyed immediately.

- Freshness: Periodic health checks ensure the pool never goes stale.
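The health policy can be sketched as a recycle step that runs after every job. This minimal sketch assumes the pool exposes hypothetical `keep`, `destroy`, and `replace` callbacks. The `docker run` flags in `ISOLATION_FLAGS` are real, but the specific limits are illustrative, since the post only says "no network, limited disk". The periodic freshness check is not shown.

```python
from enum import Enum
from typing import Callable

# One possible lockdown using real `docker run` flags; the values are hypothetical.
ISOLATION_FLAGS = [
    "--network", "none",            # no network access
    "--memory", "256m",             # hard memory cap; processes past it are OOM-killed
    "--memory-swap", "256m",        # equal to --memory, so no swap on top
    "--pids-limit", "64",           # caps process count (e.g. fork bombs)
    "--read-only",                  # immutable root filesystem
    "--tmpfs", "/tmp:rw,size=16m",  # small in-memory scratch space instead of disk
]


class Outcome(Enum):
    OK = "ok"
    TLE = "time limit exceeded"
    OOM = "out of memory"


DIRTY = {Outcome.TLE, Outcome.OOM}


def recycle(cid: str, outcome: Outcome,
            keep: Callable[[str], None],
            destroy: Callable[[str], None],
            replace: Callable[[], str]) -> str:
    """Return the ID of the container that goes back into the pool.

    Clean runs are kept; TLE/OOM runs are treated as dirty, destroyed,
    and swapped for a freshly booted container.
    """
    if outcome in DIRTY:
        destroy(cid)      # e.g. `docker rm -f <cid>`
        fresh = replace()
        keep(fresh)
        return fresh
    keep(cid)             # e.g. pause it and return it to the idle queue
    return cid
```

Destroying a dirty container rather than trying to clean it keeps the policy simple. A container that hit a limit is never trusted again.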